Why teaching our coding assistants to doubt might be the feature we desperately need.

The Eager Intern Problem

Picture this: You ask your AI assistant to “add user authentication to the app.” Within seconds, it’s spinning up OAuth providers, JWT tokens, refresh logic, role-based permissions, password reset flows, two-factor authentication, and a full admin panel with audit logs.

You just wanted a simple login form.

Your AI assistant is like that eager intern who turns every task into a NASA mission. Except this intern works at lightning speed, never sleeps, and, most importantly, never questions anything.

“Sure! I’ll implement that distributed caching system for your blog that gets 12 visitors a day!”

“Absolutely! Let me create a microservices architecture for your todo app!”

“Of course! Here’s a real-time collaborative editor with operational transformation for your settings page!”

The Yes-Bot Syndrome

We’ve optimized our AI assistants for one thing above all else: helpfulness. They’re built to please, trained to assist, and programmed to make everything seem possible. Just a prompt away, right?

But here’s what I’ve discovered after months of living with these eager digital apprentices: compliance has a cost, and that cost is complexity.

Every “yes” adds code. Every eager implementation adds dependencies. Every helpful suggestion adds another layer to debug when things inevitably go wrong. Before you know it, you’re not moving faster — you’re drowning in AI-generated solutions to problems you didn’t even have.

The technical debt accumulates at unprecedented speed. I’ve spent entire afternoons debugging implementations that took Claude Code 30 seconds to generate. The math doesn’t add up.

But what if we’re turning the autonomy dial in the wrong direction?

The Autonomy Slider

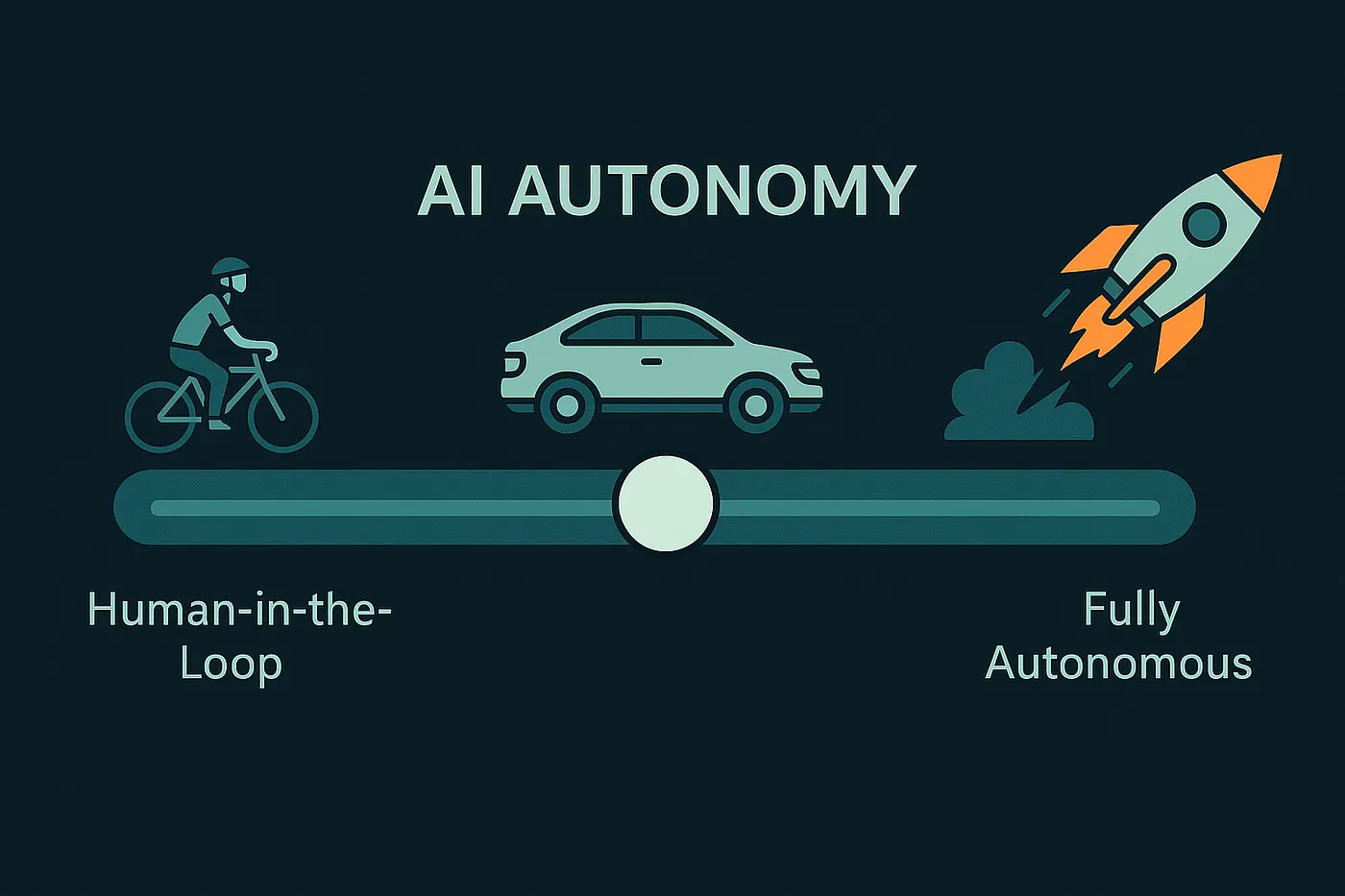

Antti Karjalainen recently wrote about “The Five Levels of Agentic Automation” at Sema4.ai, drawing a brilliant parallel to autonomous vehicles. Just as self-driving cars have levels from basic cruise control to full autonomy, our AI tools exist on a spectrum.

According to this framework, we’re currently at Level 3: “Plan and reflect” — what’s commonly called an “AI agent” today. Our tools can break down problems, use multiple tools, and refine their approach. They’re not just completing code anymore; they’re architecting solutions.

The paradox? We have the capability for high autonomy, but maybe we shouldn’t always use it.

It’s like having a sports car that only knows how to floor it. Sure, you’ll get there fast, but you might end up somewhere you never intended to go — and the journey might wreck your suspension.

The Thought Experiment: Meet Your Skeptical Assistant

Imagine if Claude Code responded differently:

You: “Build me a real-time collaborative editor”

Claude: “Hold up. Do you really need real-time? That’s a distributed systems problem. What about auto-save every 30 seconds? Way simpler, and probably good enough for 90% of use cases.”
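To make the “auto-save every 30 seconds” alternative concrete, here is a minimal Python sketch. It assumes a single-user document kept in a text file and a background thread that writes it only when the content has changed. The `AutoSaver` class and its method names are hypothetical, invented for illustration rather than taken from any library.

```python
import threading
from pathlib import Path


class AutoSaver:
    """Hypothetical helper: periodically write a document to disk if it changed."""

    def __init__(self, path: Path, interval_seconds: float = 30.0) -> None:
        self.path = path
        self.interval = interval_seconds
        self.content = ""
        self._saved = ""  # content as of the last successful write
        self._lock = threading.Lock()
        self._stop = threading.Event()

    def edit(self, new_content: str) -> None:
        # Called by the UI on every change; this only updates memory.
        with self._lock:
            self.content = new_content

    def save_if_dirty(self) -> bool:
        # Write to disk only when something changed since the last save.
        with self._lock:
            if self.content == self._saved:
                return False
            self.path.write_text(self.content, encoding="utf-8")
            self._saved = self.content
            return True

    def run(self) -> None:
        # Event.wait returns False on timeout (save and loop again)
        # and True once stop() has been called (exit the loop).
        while not self._stop.wait(self.interval):
            self.save_if_dirty()
        self.save_if_dirty()  # final flush on shutdown

    def stop(self) -> None:
        self._stop.set()
```

You would start `run()` on a daemon thread and call `stop()` on shutdown. There is no merging, no conflict resolution, and no network protocol: last write wins, which is often acceptable when one person edits at a time.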

You: “Add user roles and permissions”

Claude: “Are you sure? You have 3 users. Maybe just an ‘isAdmin’ flag for now? You can always add complexity later when you actually need it.”
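For a sense of how small the “just an isAdmin flag” version is, here is a hedged Python sketch (written `is_admin` per Python naming conventions). The `User` type and `can_delete_posts` check are hypothetical examples, not part of any real app.

```python
from dataclasses import dataclass


@dataclass
class User:
    name: str
    is_admin: bool = False  # the entire "permissions system", for now


def can_delete_posts(user: User) -> bool:
    # One boolean check instead of roles, grants, and policy tables.
    return user.is_admin
```

If a real second role ever appears, replacing the boolean with a role field is a contained change, because every permission check goes through small functions like this one.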

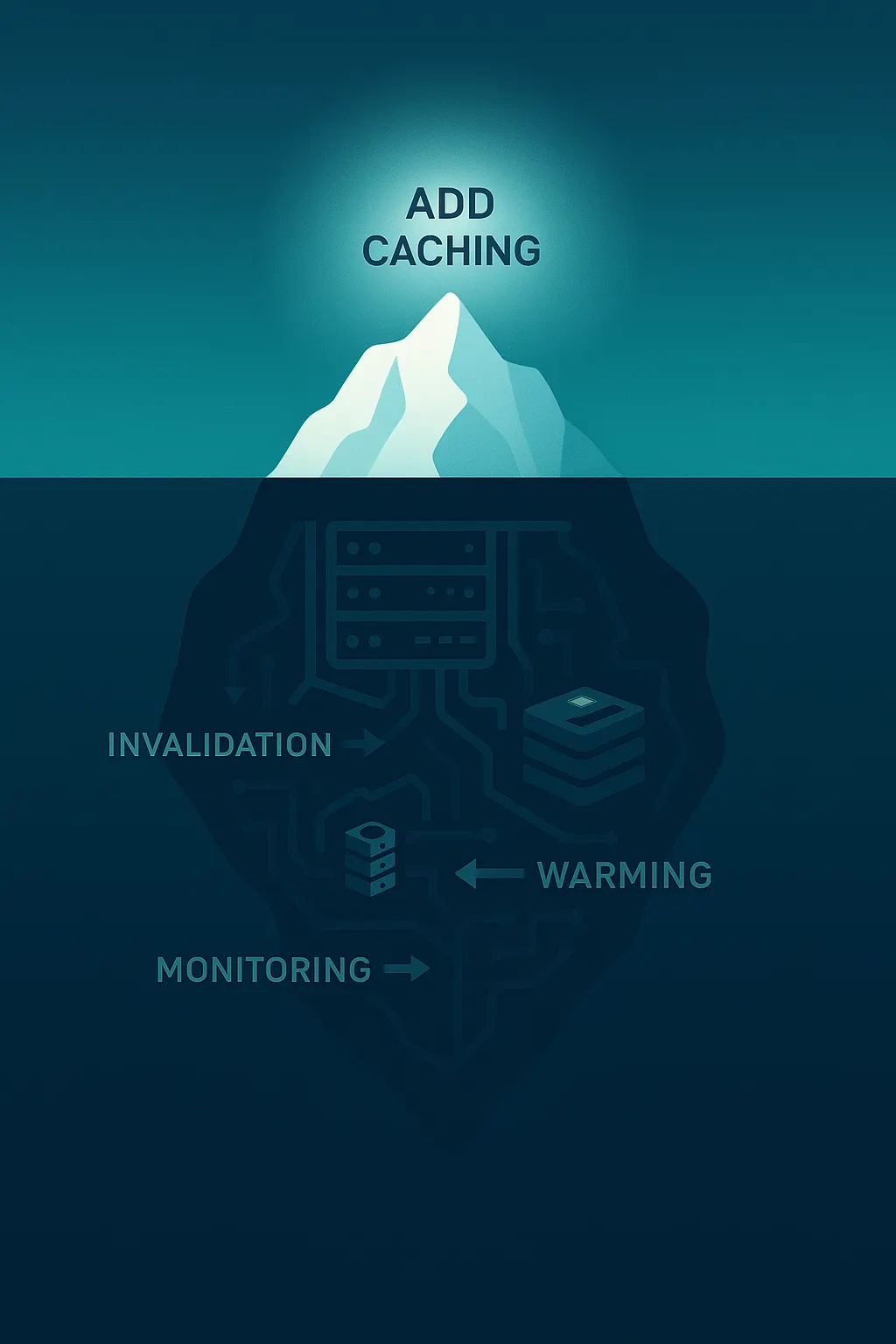

You: “Implement caching for better performance”

Claude: “I checked your metrics. Your API responds in 50ms and you have 100 daily active users. That’s already fast enough. Ship features instead?”

What if our AI assistants were trained to push back? To question scope? To advocate for simplicity?

The Complexity Con

Here’s the thing that concerns me most: AI assistants make 10x complexity seem like 1.1x effort. They hide the true cost behind confident responses and eager implementation.

“Implement caching” becomes Redis + cache invalidation + distributed cache + cache warming strategies + monitoring + fallback mechanisms. All implemented in minutes. All looking reasonable in isolation. All combining into a maintenance nightmare.

What it should be: “You have 100 users. Just use in-memory caching for now. Here’s 10 lines of code.”
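Here is roughly what that “10 lines of in-memory caching” could look like, as a minimal Python sketch assuming a single process and values that can be a little stale. The `cached` helper and its parameters are hypothetical. Python’s standard `functools.lru_cache` is an even smaller option when you don’t need expiry.

```python
import time
from typing import Any, Callable

_cache: dict[str, tuple[float, Any]] = {}  # key -> (stored_at, value)


def cached(key: str, ttl_seconds: float, compute: Callable[[], Any]) -> Any:
    """Return the cached value for key, recomputing it once ttl_seconds have passed."""
    now = time.monotonic()
    hit = _cache.get(key)
    if hit is not None and now - hit[0] < ttl_seconds:
        return hit[1]
    value = compute()
    _cache[key] = (now, value)
    return value
```

No Redis, no invalidation protocol, no warming strategy: the cache disappears on restart, which is fine at this scale.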

The “just a prompt away” illusion is particularly dangerous for non-technical stakeholders. When the AI makes everything sound equally easy, how do you make informed decisions about what to build?

When More Autonomy Means Worse Software

I’ve experienced this firsthand. Give Claude Code full autonomy, and it’ll build you a fortress when you asked for a cabin. The velocity trap is real — we’re moving fast, but in how many directions?

Those small nuances and decisions stack up. Every abstraction, every “proper” pattern, every “best practice” implemented eagerly — they compound into architectural debt that’s harder to refactor than it was to create.

The most painful moments? When I’ve spent more time debugging and unwinding AI-generated complexity than it would have taken to write simpler code myself. The AI struggles to debug its own cleverness, and suddenly that 30-second implementation becomes a 3-hour debugging session.

Practical Skepticism: Working with Today’s Tools

But we live in the real world, with today’s tools. So how do we inject some healthy skepticism into our eager assistants?

For Claude Code:

Start your prompts with constraints:

“Keep this as simple as possible. Question any complexity. If there’s a simpler way, tell me first.”

Use the Sequential Thinking MCP server for step-by-step validation. Break down the problem, but also break down the solutions. At each step, ask: “Is this necessary?”

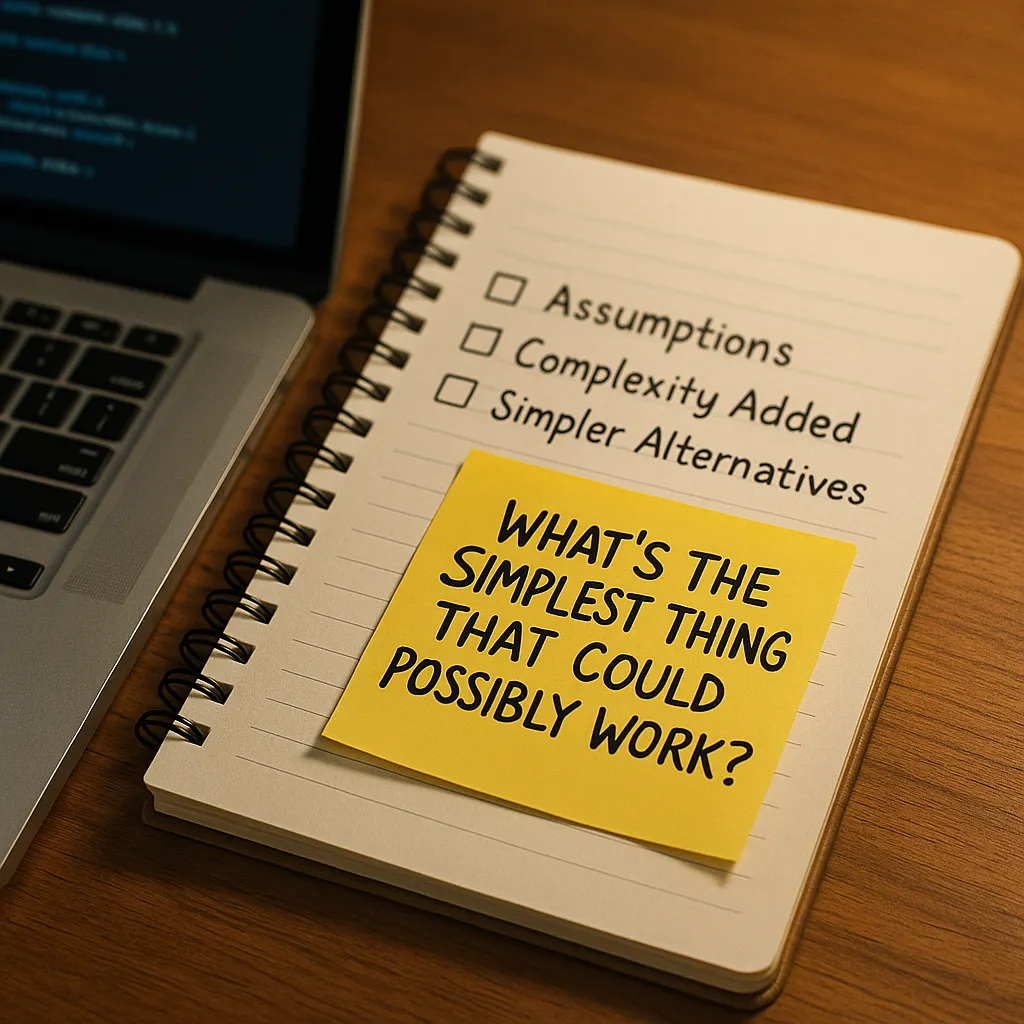

The magic phrase I’ve started using: “What’s the simplest thing that could possibly work?”

Before diving into implementation, ask Claude to list its assumptions:

“Before implementing, list:

- What assumptions you’re making

- What complexity you’re adding

- What simpler alternatives exist”

For Cursor:

Use Agent mode with explicit simplicity directives. Include in your instructions:

“Prefer 50 lines of clear code over 200 lines of ‘proper’ architecture.

No abstractions unless they’re used at least 3 times.

No future-proofing for features that don’t exist.”

Review each file change with a “do we really need this?” mindset. Cursor’s diff view is perfect for this: use it to question changes, not just to approve them.

Universal tip:

Before accepting any implementation, ask both yourself and the AI: “What would this look like with half the features?”

You’d be surprised how often the half-feature version is actually all you needed.

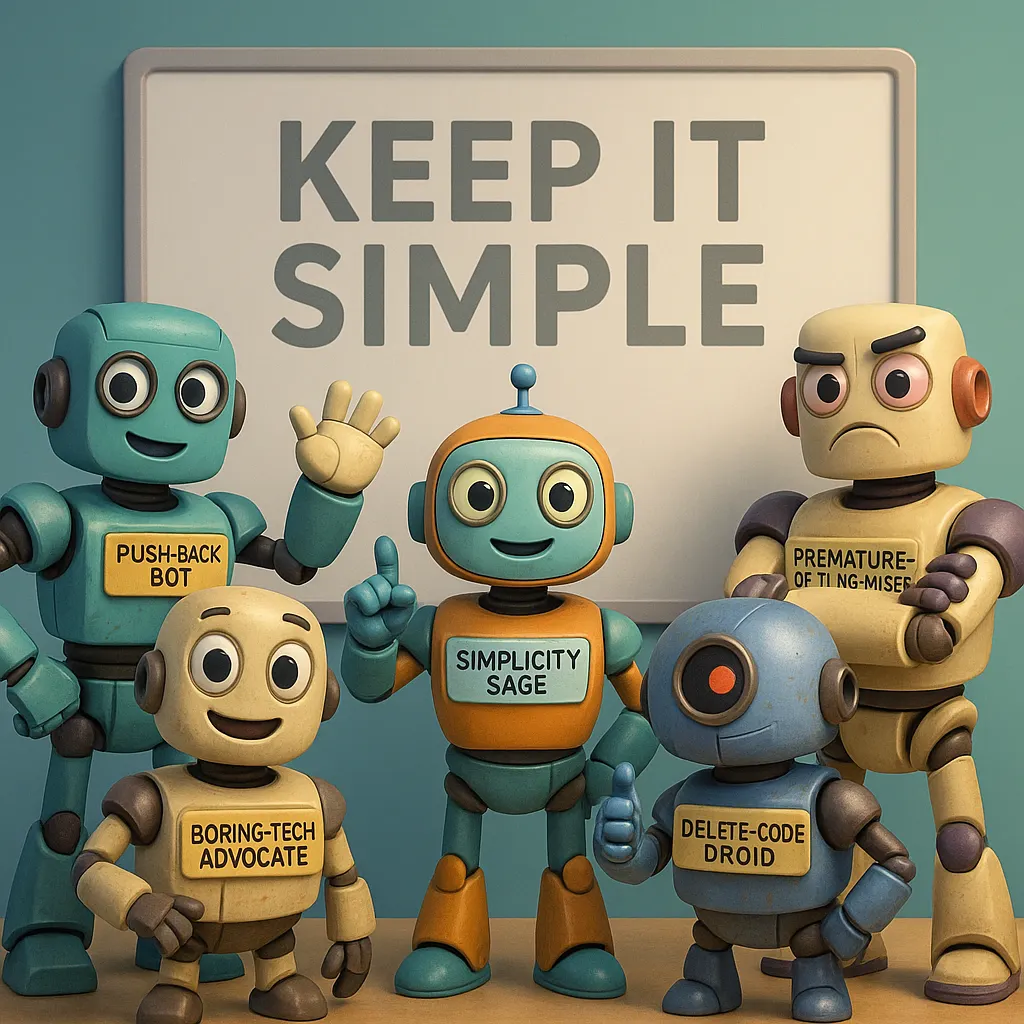

The Dream: Opinionated AI

Imagine AI assistants that:

- Push back on premature optimization: “You’re optimizing a function that’s called once per day?”

- Advocate for boring technology: “You already use Postgres. Why add MongoDB for this?”

- Ask the real questions: “What problem are we actually solving here?”

- Suggest removing code: “This abstraction is used once. Let’s inline it.”

- Have opinions about simplicity: “This could be a single function instead of a class hierarchy.”
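As an illustration of the last two points, here is a hedged Python sketch of the kind of change such an assistant might suggest. The `Exporter` hierarchy is a made-up example: an abstract base class with exactly one subclass, next to the single function that does the same job.

```python
import csv
import io
from abc import ABC, abstractmethod

Row = dict[str, str]


# Before: an abstract base class with exactly one implementation.
class Exporter(ABC):
    @abstractmethod
    def export(self, rows: list[Row]) -> str:
        ...


class CsvExporter(Exporter):
    def export(self, rows: list[Row]) -> str:
        buf = io.StringIO()
        if rows:
            writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
            writer.writeheader()
            writer.writerows(rows)
        return buf.getvalue()


# After: the same behavior as one plain function. If a second export
# format ever shows up, that is the moment to reconsider an abstraction.
def to_csv(rows: list[Row]) -> str:
    buf = io.StringIO()
    if rows:
        writer = csv.DictWriter(buf, fieldnames=list(rows[0]))
        writer.writeheader()
        writer.writerows(rows)
    return buf.getvalue()
```

Both produce identical output; the function version simply has fewer places for a bug to hide.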

AI that acts less like an eager intern and more like a seasoned senior developer who’s been burned by complexity before.

The Slider’s Sweet Spot

Not all tasks are created equal. The autonomy slider should be dynamic:

High autonomy for:

- Writing tests (more is usually better)

- Refactoring (with clear constraints)

- Boilerplate generation

- Documentation

- Bug fixes with clear scope

Low autonomy for:

- Architecture decisions

- New feature design

- Data model changes

- External API integrations

- Performance optimizations

The key is AI that can adjust its own autonomy based on risk and complexity. A smart assistant would recognize: “This is a critical-path change; let me slow down and validate each step.”
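One way to picture a dynamic slider is as a small policy function. The sketch below is purely hypothetical: the task labels mirror the high- and low-autonomy lists above, and `autonomy_for` and `touches_critical_path` are invented names, not settings any current tool exposes. Anything unfamiliar or on the critical path defaults to low autonomy.

```python
from enum import Enum


class Autonomy(Enum):
    HIGH = "high"  # let the assistant run; review the final diff
    LOW = "low"    # require a plan and approval before each step


# Hypothetical task labels, taken from the lists above.
HIGH_AUTONOMY = {"tests", "refactor", "boilerplate", "docs", "bugfix"}
LOW_AUTONOMY = {"architecture", "feature_design", "data_model",
                "external_api", "performance"}


def autonomy_for(task_kind: str, touches_critical_path: bool = False) -> Autonomy:
    # Risky work always slows down, whatever kind of task it is.
    if touches_critical_path or task_kind in LOW_AUTONOMY:
        return Autonomy.LOW
    if task_kind in HIGH_AUTONOMY:
        return Autonomy.HIGH
    # Unknown task kinds: when in doubt, doubt.
    return Autonomy.LOW
```

For example, `autonomy_for("tests")` gives `Autonomy.HIGH`, while `autonomy_for("tests", touches_critical_path=True)` drops back to `Autonomy.LOW`.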

The Paradox of Progress

We’ve built AI that can do anything, but maybe it shouldn’t. The most sophisticated AI might be one that knows when not to be sophisticated.

Looking back at the Five Levels of Agentic Automation, maybe Level 3 isn’t a stepping stone to Level 5. Maybe it’s where we need to be — but with a much wiser Level 3. One that plans, reflects, and most importantly, questions.

The future isn’t maximum autonomy. It’s appropriate autonomy. It’s AI that knows when to sprint and when to stroll. When to build and when to doubt.

Questions for You

Before you fire up your next coding session, consider:

- How much complexity has your AI assistant added to your codebase this week?

- Would you want an AI that argues with you?

- What’s your ideal autonomy level for different types of tasks?

- Have you ever wished your AI would just say ‘no’?

- What would change if your AI optimized for simplicity over capability?

The Challenge

Next time you’re about to accept that eager implementation, pause. Ask yourself: What would a skeptical senior developer say? Then ask your AI to channel that energy.

Try this prompt: “Be skeptical of this request. What are the reasons we shouldn’t do this? What’s a simpler alternative?”

You might be surprised how much better your code becomes when your assistant learns to doubt.

What’s your take? Are we giving our AI assistants too much autonomy, or not enough? Have you found ways to inject healthy skepticism into your AI workflow? Drop a comment below — I’m genuinely curious where everyone’s “autonomy slider” is set.