Remember that feeling when your AI coding assistant starts “helping” by creating an infinite loop of errors? You fix one thing, it breaks two more. You revert a change, it suggests the same broken solution. Before you know it, you’re 47 error messages deep, your terminal looks like the Matrix, and you’re seriously considering going back to vim.

I call it the AI coding death spiral, and if you’ve spent any time with Claude Code, Cursor, or any other AI coding assistant, you know exactly what I’m talking about.

The Universal Problem Nobody Talks About

Here’s the thing: This happens to everyone. It doesn’t matter if you’re using Claude Code, Cursor, or GitHub Copilot. It doesn’t matter if you’re writing Python, JavaScript, or Rust. The death spiral is a fundamental characteristic of current LLMs.

Why? Because these models are pattern-matching machines that will confidently hallucinate solutions that feel right but don’t actually exist. They’ll import libraries that sound plausible, call methods that should exist, and create elegant solutions to problems that weren’t actually your problem.

The result? Hours lost in recursive debugging hell.
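One cheap way to catch the “plausible-sounding library” failure before it costs you an afternoon is to check that every import in a generated file actually resolves in your environment. Here’s a minimal sketch using only the standard library. The function name `unresolved_imports` is mine, not something from any tool, and it only looks at absolute imports of top-level packages.

```python
import ast
import importlib.util


def unresolved_imports(source: str) -> list[str]:
    """Return top-level module names imported by `source` that cannot be found."""
    missing = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            names = [node.module]
        else:
            continue  # not an import, or a relative import we don't check here
        for name in names:
            top_level = name.split(".")[0]
            # find_spec returns None when no installed module has this name
            if importlib.util.find_spec(top_level) is None:
                missing.add(top_level)
    return sorted(missing)
```

This won’t tell you whether a method on a real library exists, only whether the library itself does, so treat it as a first filter rather than a full answer.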

The Obvious (But Incomplete) Solutions

Sure, there are some basic guardrails you can set up:

- Unit tests — Great, but the AI will happily write tests that pass for code that doesn’t work

- Linting and formatting — Helpful, but syntactically correct garbage is still garbage

- Frequent validation — Better, but requires constant vigilance
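To make the unit test caveat concrete, here’s a small illustrative sketch (all names are hypothetical). The function has a deliberate bug, and the first “test” passes anyway because its expected value comes from the code under test. The second one fails, as it should, because its expected value comes from the requirement.

```python
def apply_discount(price: float, percent: float) -> float:
    """Intended: take `percent` off `price`. Deliberate bug: it adds it instead."""
    return price * (1 + percent / 100)


def tautological_test() -> bool:
    # The expected value is computed by the code under test, so this cannot fail.
    expected = apply_discount(100.0, 20.0)
    return apply_discount(100.0, 20.0) == expected


def independent_test() -> bool:
    # The expected value is worked out by hand from the requirement:
    # 20% off 100 should be 80.
    return apply_discount(100.0, 20.0) == 80.0
```

When you review AI-written tests, the question to ask is where each expected value came from.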

Claude Code even has hooks for systematic improvement, which is fantastic. But these are band-aids on a deeper problem.

Without clear instructions, most AI coding agents will turn a simple “build a TODO app” request into a massive 50,000-line project.

The Real Solution: Teaching Your AI to Think Simple

Here’s where things get interesting. While doom-scrolling through YouTube at 2 AM (as one does), I stumbled upon Rich Hickey’s 2011 Strange Loop talk “Simple Made Easy.” If you haven’t seen it, it’s the kind of talk that makes you question everything about how you write code.

Hickey’s core insight: We conflate “simple” (one-fold, not interleaved) with “easy” (familiar, near at hand).

That’s when it hit me: AI coding assistants are optimized for “easy,” not “simple.”

They give you the familiar solution, the one that looks like code they’ve seen before. But familiar doesn’t mean correct, and it definitely doesn’t mean maintainable.

The Method: Converting Wisdom into Rules

Instead of fighting the AI’s nature, what if we could teach it better principles? Here’s the methodology I developed:

Step 1: Extract the Wisdom

Take any classic talk, blog post, or technical article with principles you value. For me, it was Hickey’s talk. I threw the YouTube link into Google’s NotebookLM, which generated comprehensive study notes. (Pro tip: NotebookLM can even create an AI podcast about the content, which is wild, but that’s another blog post.)

Step 2: Create a Learning Environment

I created a Claude Desktop project specifically for this purpose. Into this project, I added:

- Deep research on writing effective rules for Claude Code

- The extracted study notes from NotebookLM

- Context about what makes good AI coding agent rules

Step 3: Generate Actionable Rules

With all this context, I prompted Claude to create specific rules based on Hickey’s principles. The result was simple-mindset.md, a set of guidelines that fundamentally changed how my AI assistant approaches problems.

Check out the GitHub gist 🔗

Step 4: Deploy and Iterate

Add these rules to your project’s context, and watch your AI’s behavior transform.
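If you want to script this step, here’s a minimal sketch. It assumes your tool reads a single context file from the project; for Claude Code that is conventionally CLAUDE.md, while other assistants use other file names. The helper `add_rules_to_context` is hypothetical, and copying the file by hand works just as well.

```python
from pathlib import Path


def add_rules_to_context(rules_file: Path, context_file: Path) -> bool:
    """Append the rules to the context file unless they are already there.

    Returns True if the context file was modified.
    """
    rules = rules_file.read_text(encoding="utf-8").strip()
    existing = context_file.read_text(encoding="utf-8") if context_file.exists() else ""
    if rules in existing:
        return False  # already deployed; running this twice changes nothing
    separator = "\n\n" if existing.strip() else ""
    context_file.write_text(existing.rstrip() + separator + rules + "\n", encoding="utf-8")
    return True


if __name__ == "__main__":
    # Assumed file names: adjust to wherever your rules and context file live.
    add_rules_to_context(Path("simple-mindset.md"), Path("CLAUDE.md"))
```

Keeping the operation idempotent means you can rerun it after every iteration on the rules without duplicating them, as long as you replace the old rules text when it changes.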

What “Simple-Minded” AI Actually Looks Like

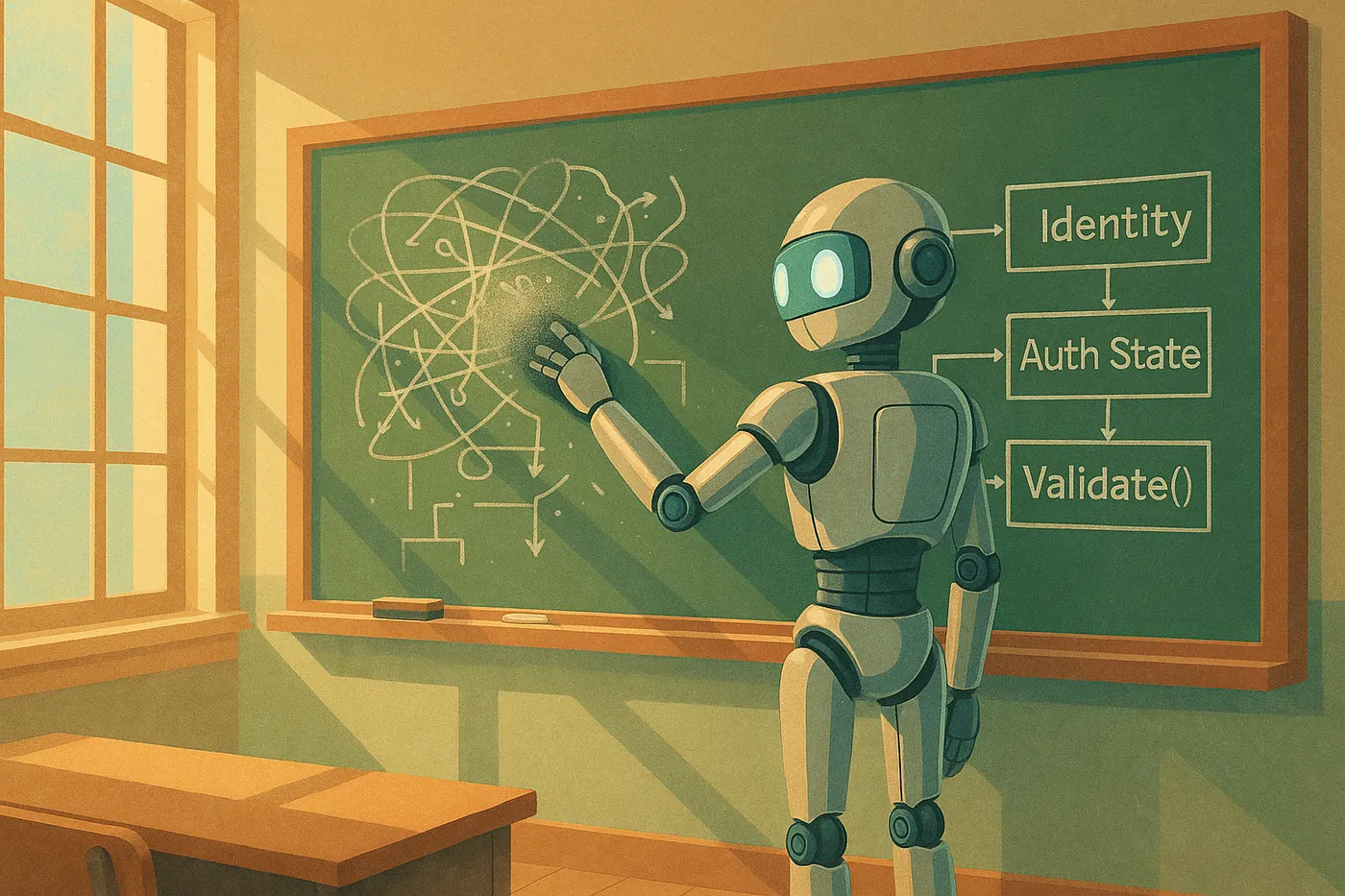

Here’s a taste of what’s in my simple-mindset.md:

Instead of: “Add user authentication to this app”

Simple approach: “Create a user identity value. Create an authentication state. Create a function that validates credentials. Keep these separate.”
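Here’s roughly what that separation can look like in code. This is my own illustrative sketch, not an excerpt from simple-mindset.md: the names `UserIdentity`, `AuthState`, and `validate_credentials` are made up for the example, and the PBKDF2 iteration count is a placeholder rather than a security recommendation.

```python
import hashlib
import hmac
from dataclasses import dataclass
from enum import Enum


@dataclass(frozen=True)
class UserIdentity:
    """Who the user is. A plain immutable value with no behavior."""
    user_id: str
    email: str


class AuthState(Enum):
    """Whether the session is authenticated. Kept separate from identity."""
    ANONYMOUS = "anonymous"
    AUTHENTICATED = "authenticated"


def hash_password(password: str, salt: bytes) -> bytes:
    # PBKDF2 from the standard library; the iteration count is illustrative only.
    return hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, 100_000)


def validate_credentials(password: str, salt: bytes, stored_hash: bytes) -> bool:
    """Pure check: no session, no database, no identity lookup."""
    return hmac.compare_digest(hash_password(password, salt), stored_hash)


def next_auth_state(credentials_valid: bool) -> AuthState:
    """The only place where a validation result turns into a state."""
    return AuthState.AUTHENTICATED if credentials_valid else AuthState.ANONYMOUS
```

None of the three pieces knows about the others, so each can be tested, replaced, or reused on its own.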

Instead of: Mixing data fetching, transformation, and UI rendering in one component.

Simple approach: Separate data sources, data transformations, and data presentation into distinct, composable units.
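The same idea as a sketch, with a hard-coded data source standing in for a real API or database. Everything here (the `Order` type, the function names, the sample data) is invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    customer: str
    total_cents: int


def fetch_orders() -> list[Order]:
    """Data source. Hard-coded here; a real one might call an API or database."""
    return [Order("ada", 1250), Order("grace", 4000), Order("ada", 750)]


def totals_by_customer(orders: list[Order]) -> dict[str, int]:
    """Transformation: a pure function with no I/O and no formatting."""
    totals: dict[str, int] = {}
    for order in orders:
        totals[order.customer] = totals.get(order.customer, 0) + order.total_cents
    return totals


def render_totals(totals: dict[str, int]) -> str:
    """Presentation: turns already-computed data into text."""
    return "\n".join(
        f"{customer}: ${cents / 100:.2f}" for customer, cents in sorted(totals.items())
    )
```

Composing them is a single line, `render_totals(totals_by_customer(fetch_orders()))`, and swapping the data source or the output format touches exactly one function.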

The AI stops trying to be clever with complex, intertwined solutions. It starts building things that are boringly simple — and actually work.

The Broader Pattern

This isn’t just about Simple Made Easy. The real insight is that we can encode any timeless software principle into rules our AI assistants can follow.

Think about it:

- Kent Beck’s “Make it work, make it right, make it fast”

- The SOLID principles

- Domain-Driven Design concepts

Each of these can be transformed from abstract wisdom into concrete AI behavior.

Your Turn: Build Your Own AI Philosophy

Here’s the step-by-step:

- Find your source material — What technical talk or article fundamentally changed how you think about code?

- Extract the essence — Use NotebookLM or similar tools to create structured notes

- Create a rule-generation project — Set up a Claude Desktop project with context about good AI coding rules

- Generate your rules — Prompt your AI to create specific, actionable rules from the principles

- Test and refine — Use the rules in real projects and iterate

Beyond the Death Spiral

The death spiral isn’t going away. It’s a fundamental characteristic of how current LLMs work. But by encoding better thinking patterns into our AI assistants, we can:

- Catch problems before they compound

- Build systems that are actually maintainable

- Spend less time debugging and more time building

Most importantly, we can stop treating AI coding assistants as black boxes and start treating them as teachable partners.